Schema extensions make it possible to store custom data directly on objects in Azure AD. This article describes how to use the Microsoft Graph PowerShell module to access the data we defined and added in Introducing user schema extensions in Delegate365.

Prerequisites

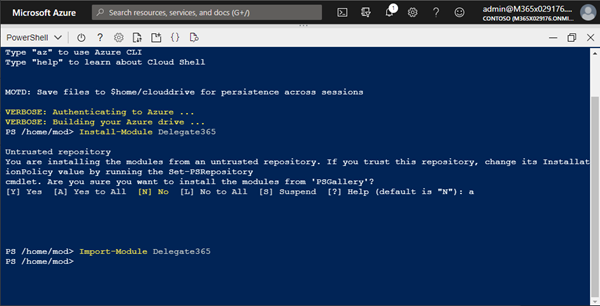

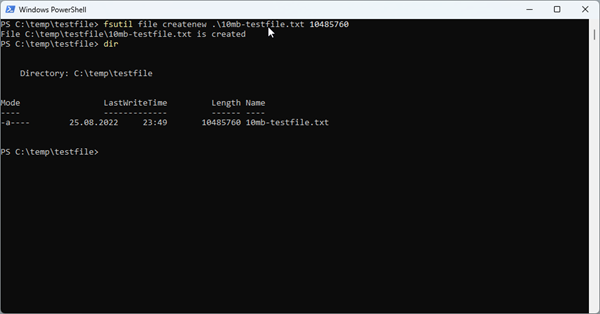

To work with Microsoft Graph and PowerShell, we need to install the Microsoft.Graph module as described at Install the Microsoft Graph PowerShell SDK. You can install the module in PowerShell Core or Windows PowerShell using the following command.

# https://docs.microsoft.com/en-us/graph/powershell/installation

Install-Module Microsoft.Graph -Scope CurrentUser

If you already have the module installed, check the version (currently, the latest version is '1.3.1'):

Get-InstalledModule Microsoft.Graph

To update to the latest version, run:

Update-Module Microsoft.Graph -Force

If you want to completely uninstall the Microsoft.Graph module, run Uninstall-Module Microsoft.Graph.
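Before running the install or update commands, a script can check whether a sufficiently recent version is already present. The following is a minimal sketch only; Test-ModuleMinimumVersion is a hypothetical helper name and not part of the Microsoft.Graph module.

```powershell
function Test-ModuleMinimumVersion {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string]  $Name,
        [Parameter(Mandatory)] [version] $MinimumVersion
    )

    # Get-Module -ListAvailable finds modules on disk without importing them.
    $installed = Get-Module -ListAvailable -Name $Name |
        Sort-Object -Property Version -Descending |
        Select-Object -First 1

    # $true only if a module was found and its newest version is new enough.
    return [bool]($installed -and $installed.Version -ge $MinimumVersion)
}

# Usage (commented out so the sketch has no side effects):
# if (-not (Test-ModuleMinimumVersion -Name 'Microsoft.Graph' -MinimumVersion '1.3.1')) {
#     Install-Module Microsoft.Graph -Scope CurrentUser
# }
```

The check only looks at module versions found on the local machine; it does not contact the PowerShell Gallery.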

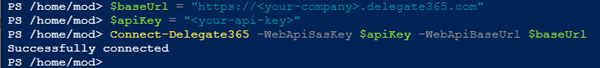

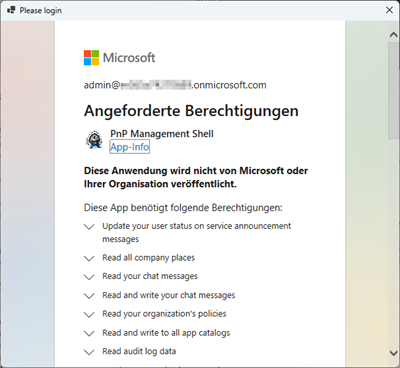

Connect to Graph

Once the module is installed, we can use Connect-MgGraph to sign in with a user account via device login and access data in the M365 tenant. The scopes define the permissions our script needs. In this sample, we want to access User data (and eventually Group data).

Import-Module Microsoft.Graph

# Select-MgProfile -Name "beta"

Connect-MgGraph -TenantId '<tenantname>.onmicrosoft.com' -Scopes "User.ReadWrite.All","Group.ReadWrite.All"

Get-MgContext

Sign in and copy the device code into the browser page. When you close the browser, Connect-MgGraph should greet you with: Welcome To Microsoft Graph!

We can check the connection with Get-MgContext. The output should look like this.

Get-MgContext

ClientId : <someid>

TenantId : <tenantid>

CertificateThumbprint :

Scopes : {User.ReadWrite.All, Group.ReadWrite.All…}

AuthType : Delegated

CertificateName :

Account : admin@<tenantname>.onmicrosoft.com

AppName : Microsoft Graph PowerShell

ContextScope : CurrentUser

Certificate :

Looks good. Now we are ready to access the schema properties.
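In a script, the same check can be automated by comparing the scopes of the context against the scopes the script needs. This is a sketch under assumptions: Test-GraphScope is a hypothetical helper, and it only expects an object with a Scopes property, such as the one Get-MgContext returns.

```powershell
function Test-GraphScope {
    [CmdletBinding()]
    param(
        # Context object as returned by Get-MgContext (anything with a Scopes property).
        [Parameter(Mandatory)] [AllowNull()] $Context,
        [Parameter(Mandatory)] [string[]] $RequiredScope
    )

    # No context means we are not connected at all.
    if (-not $Context) { return $false }

    # Collect the required scopes that the current context does not contain.
    $missing = @($RequiredScope | Where-Object { $Context.Scopes -notcontains $_ })
    if ($missing.Count -gt 0) {
        Write-Warning "Missing scopes: $($missing -join ', ')"
        return $false
    }
    return $true
}

# Usage after Connect-MgGraph (commented out, requires a connection):
# Test-GraphScope -Context (Get-MgContext) -RequiredScope 'User.ReadWrite.All','Group.ReadWrite.All'
```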

Get all existing schema extensions

To learn how schema extensions work and how to retrieve them, check out the documentation at Get schemaExtension. To get all schema extensions in the tenant with PowerShell, we can use the Get-MgSchemaExtension cmdlet with the -All parameter.

$SchemaExtensionList = Get-MgSchemaExtension -All

$SchemaExtensionList

We get a long list. It seems Microsoft uses schema extensions extensively. To see the properties returned, we inspect the first element:

$SchemaExtensionList[0] | Format-List

Description : sample desccription

Id : adatumisv_exo2

Owner : 617720dc-85fc-45d7-a187-cee75eaf239e

Properties : {p1, p2}

Status : Available

TargetTypes : {Message}

Keys : {}

Values : {}

AdditionalProperties : {}

Count : 0

The relevant properties are, of course, the Id, the Owner, the Properties and the Status. The status can be "InDevelopment", "Available" or "Deprecated". Once the status is set to "Available", the owner can only add properties; existing properties can no longer be removed and the extension can no longer be deleted. In Delegate365, we use the status "InDevelopment" to stay more flexible.

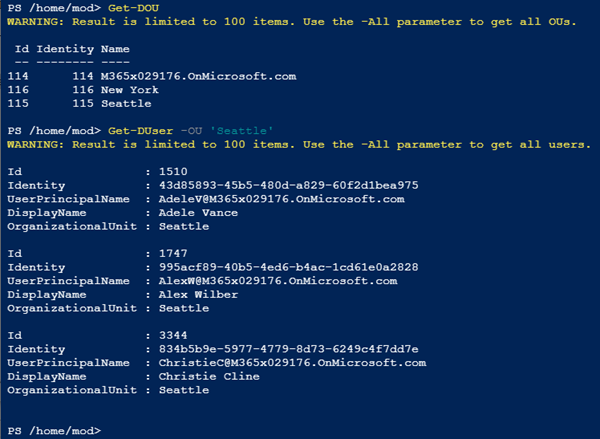

Get specific existing schema extensions

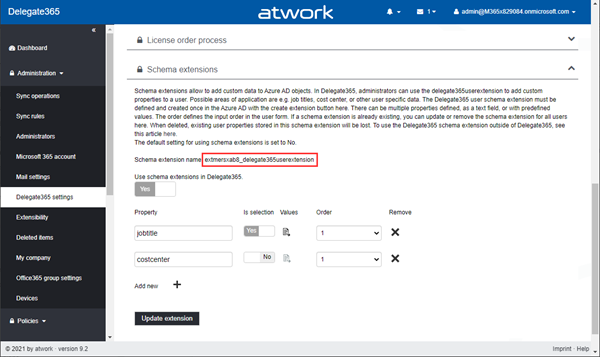

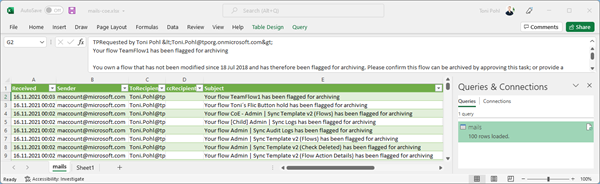

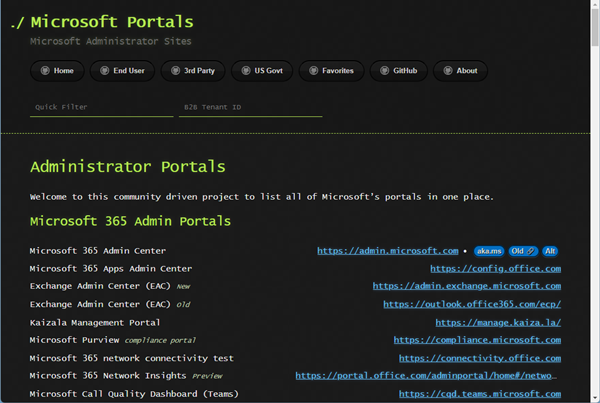

To get only our own schema extensions, we need either the App Id that owns the custom schema extension or the name of the extension. In Delegate365, we can open the Delegate365 settings and find the schema extension name in the Schema Extensions section, as shown here.

The schema extension name always ends with "delegate365userextension". Azure AD creates a random prefix for the name. In this sample, the generated name is "extmersxab8_delegate365userextension".

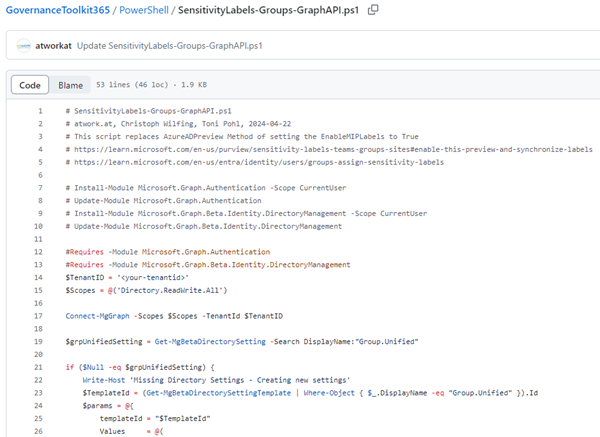

Unfortunately, Graph (still) does not have an OData function to search with contains or endswith. The Get-MgSchemaExtension cmdlet does have a -Filter option, but that does not work! However, we can get all schema extensions and filter for our own extension ourselves. So, let's use our object and filter for our extension; adapt the search as needed.

$myext = Get-MgSchemaExtension -All | Where-Object Id -like '*_delegate365userextension'

$myext

Id                                   Description              Properties                       TargetTypes Status        Owner

--                                   -----------              ----------                       ----------- ------        -----

extmersxab8_delegate365userextension delegate365userextension {jobtitle, costcenter, favcolor} {User}      InDevelopment a3f620a2-8418-44b6-9847-aa3db8cd37db

ext7ztddysl_delegate365userextension delegate365userextension {jobtitle}                       {User}      InDevelopment a3f620a2-8418-44b6-9847-aa3db8cd37db

extvndtvtlr_delegate365userextension delegate365userextension {jobtitle}                       {User}      InDevelopment a3f620a2-8418-44b6-9847-aa3db8cd37db

We see our Delegate365 schema extension "extmersxab8_delegate365userextension" with its properties. The command also shows us the "owner". Here, the owner is the Delegate365 app with the App Id "a3f620a2-8418-44b6-9847-aa3db8cd37db". Only the owner is allowed to modify the schema extension.
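Filtering by the owning App Id instead of the name works the same way on the client side. The sketch below assumes objects with Owner and TargetTypes properties, as shown in the output above; Select-SchemaExtensionByOwner is a hypothetical helper name.

```powershell
function Select-SchemaExtensionByOwner {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory, ValueFromPipeline)] $SchemaExtension,
        [Parameter(Mandatory)] [string] $OwnerAppId,
        [string] $TargetType = 'User'
    )
    process {
        # Keep only extensions owned by the given app that apply to the target type.
        if ($SchemaExtension.Owner -eq $OwnerAppId -and
            $SchemaExtension.TargetTypes -contains $TargetType) {
            $SchemaExtension
        }
    }
}

# Usage with a live connection (commented out):
# Get-MgSchemaExtension -All |
#     Select-SchemaExtensionByOwner -OwnerAppId 'a3f620a2-8418-44b6-9847-aa3db8cd37db'
```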

A side note on working with schema extensions in Graph: currently, one application (in our case, the Delegate365 app) can own at most 5 schema extensions. As of today, the Microsoft Graph API seems to have an issue here: when a new schema extension is created, it internally creates 2 or 3 schema extensions with unique names but the same initial properties (the three rows above). These extensions are connected: all of them deliver the same data. In Delegate365, we use only the first schema extension, the one we created, by name. The remaining 2 are unused and cannot be deleted, which is another (related) bug in the Microsoft Graph API. This leaves only (5 - 3 =) 2 more possible schema extensions for the app, and when the next schema extension is created, it can happen that 2 extensions are created again...

To make it short: the Graph API has some reproducible issues, and the Delegate365 schema extension should never be removed, so that its properties can still be extended in Delegate365 if needed. Therefore, Delegate365 does not offer removing an existing user schema extension. Also, the Delegate365 setup has been reworked to ensure that the Delegate365 app is never deleted and that all secrets and certificates are renewed properly when a new Delegate365 setup is executed. Despite this, user schema extensions in Delegate365 are useful, and Delegate365 works around that Graph issue.
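To keep an eye on how many extensions an app already owns, the list can be grouped by owner. This is a rough sketch: Get-SchemaExtensionQuota is a hypothetical helper, and the default limit of 5 is simply the number mentioned above, not a value read from Graph.

```powershell
function Get-SchemaExtensionQuota {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [AllowEmptyCollection()] [object[]] $SchemaExtension,
        # Assumed per-app limit, taken from the text above.
        [int] $Limit = 5
    )

    # One result row per owning app: how many extensions it owns and how many are left.
    $SchemaExtension | Group-Object -Property Owner | ForEach-Object {
        [pscustomobject]@{
            Owner     = $_.Name
            Used      = $_.Count
            Remaining = [Math]::Max(0, $Limit - $_.Count)
        }
    }
}

# Usage with a live connection (commented out):
# Get-SchemaExtensionQuota -SchemaExtension (Get-MgSchemaExtension -All)
```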

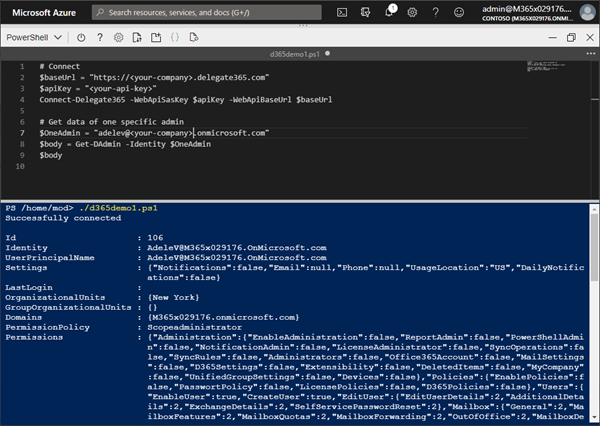

Get users with specific data

A typical use case is to get a list of all users with a specific value set in a schema extension. For example, we want to get all users who have the favcolor set to "red", or all users who have the cost center set to "1200" or similar.

Unfortunately, there is currently no ready-to-use cmdlet available for that. So, we need to accomplish this with a Graph REST call, running the query with Invoke-MgGraphRequest (using the authentication from before), as shown here:

$Url = 'https://graph.microsoft.com/v1.0/users?$filter=startswith(extmersxab8_delegate365userextension/favcolor, ''red'')&$select=id,displayname&$top=2'

$result = Invoke-MgGraphRequest -Method GET -Uri $Url

The syntax for the property in the query is <schemaextensionname>/<propertyname> (it took some time to figure this out), as the request above shows. The request returns all (well, the first two) users whose favcolor property starts with "red". Note that Graph returns results in pages, for users 100 items per page by default. So, we have to process additional pages ourselves if needed, see below.
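Because the extension name, the property and the value will differ per tenant, the request URL can be built by a small function. The following is a sketch under assumptions: New-ExtensionFilterUrl is a hypothetical helper, it always uses startswith, and it URL-encodes the filter expression, which the hand-written URL above does not do.

```powershell
function New-ExtensionFilterUrl {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string] $ExtensionName,
        [Parameter(Mandatory)] [string] $PropertyName,
        [Parameter(Mandatory)] [string] $Value,
        [string[]] $Select = @('id', 'displayName'),
        [ValidateRange(1, 999)] [int] $Top = 100
    )

    # OData string literals escape a single quote by doubling it.
    $escapedValue = $Value.Replace("'", "''")

    # <schemaextensionname>/<propertyname> is the property path used in the filter.
    $filter = "startswith(${ExtensionName}/${PropertyName}, '${escapedValue}')"

    # URL-encode the filter so spaces, quotes and parentheses survive transport.
    $query = @(
        '$filter=' + [uri]::EscapeDataString($filter)
        '$select=' + ($Select -join ',')
        '$top=' + $Top
    ) -join '&'

    return 'https://graph.microsoft.com/v1.0/users?' + $query
}

# Usage with a live connection (commented out):
# $Url = New-ExtensionFilterUrl -ExtensionName 'extmersxab8_delegate365userextension' `
#                               -PropertyName 'favcolor' -Value 'red' -Top 2
# $result = Invoke-MgGraphRequest -Method GET -Uri $Url
```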

Process the result

First, let's check the result. This is … clumsy, as we see when we output $result.value from the operation above.

$result.value

Name Value

---- -----

id <userid>

displayName Adele Vance

@odata.id https://graph.microsoft.com/v2/<someid>/directoryObjects/<userid>/Microsoft.Dire...

id <userid>

displayName Bianca Pisani

@odata.id https://graph.microsoft.com/v2/<someid>/directoryObjects/<userid>/Microsoft.Dire...

We get a hash table that contains another hash table for each item (with the selected properties). Really? So, we need to split the data to work with the corresponding users… Also, what if we get more pages of users?
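One way to make the items easier to handle is to turn each hash table into an object with named properties. This is a minimal sketch; ConvertTo-UserObject is a hypothetical helper and only picks the two properties selected in the query.

```powershell
function ConvertTo-UserObject {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory, ValueFromPipeline)]
        [System.Collections.IDictionary] $Item
    )
    process {
        # Pick the selected keys out of the hash table and emit a regular object.
        [pscustomobject]@{
            Id          = $Item['id']
            DisplayName = $Item['displayName']
        }
    }
}

# Usage with the result from above (commented out, requires $result):
# $users = $result.value | ConvertTo-UserObject
# $users | Sort-Object DisplayName | Format-Table
```

The resulting objects work with Sort-Object, Where-Object and Export-Csv like any other PowerShell object.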

Filter and loop through all users

After loading the first page, we can follow the link in the @odata.nextLink property to get the next one. See more at Paging Microsoft Graph data in your app. To make it short, here's a solution that gets all filtered users for the query, so that we can continue to work with the user id in additional steps.

# Define the first request:

$Url = 'https://graph.microsoft.com/v1.0/users?$filter=startswith(extmersxab8_delegate365userextension/favcolor, ''red'')&$select=id,displayname&$top=2'

do {

$page = Invoke-MgGraphRequest -Method GET -Uri $Url

# Returns a hash table, including a hash table for each item....!

# We are only interested in the "id" and "displayname" properties

$page.value | ForEach-Object { Write-Host $_['id'] $_['displayname'] }

# Check if there are following pages

if ($page.'@odata.nextLink') {

Write-Host 'Loading next page…'

$Url = $page.'@odata.nextLink'

} else {

Write-Host 'We got everything!'

break

}

} while ($true)

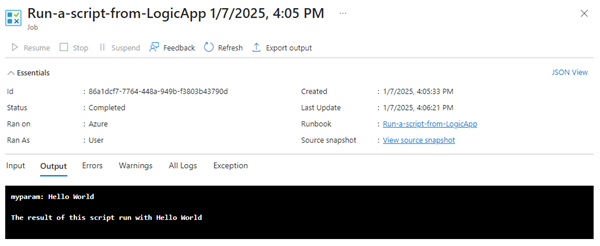

When we run this script, we loop through all filtered users with a page size of 2. When no more data is returned, we're done and leave the endless loop. As output, we should get all users with their id and display name:

bef4b76a-289a-43bc-bad4-84ee0c5f1c12 Debra Berger

30ad21be-3f2c-4c39-87c4-fad4784cb22d Adele Vance

Loading next page…

d92c0583-4b8e-4979-abd3-be0fd7c6fa3b Bianca Pisani

We got everything!

We can now continue to work with that data and do something with the users in the ForEach-Object { <do-something> } block. Eventually, we would like to add these users to a group, send emails to these users or do similar actions.
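For such follow-up steps, it can be handier to collect all items first instead of writing them to the host. The sketch below wraps the paging loop in a function; Get-GraphPagedResult and its -Request parameter are hypothetical, and the parameter exists only so the request can be replaced, for example for testing without a tenant.

```powershell
function Get-GraphPagedResult {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] [string] $Uri,
        # Script block that performs one GET request and returns the parsed page.
        # Defaults to Invoke-MgGraphRequest; replaceable for offline testing.
        [scriptblock] $Request = { param($u) Invoke-MgGraphRequest -Method GET -Uri $u }
    )

    $items = [System.Collections.Generic.List[object]]::new()
    $next = $Uri
    while ($next) {
        $page = & $Request $next
        foreach ($item in $page['value']) { $items.Add($item) }
        # '@odata.nextLink' is absent on the last page, which ends the loop.
        $next = $page['@odata.nextLink']
    }
    return $items
}

# Usage with a live connection (commented out):
# $allUsers = @(Get-GraphPagedResult -Uri $Url)
# $allUsers | ForEach-Object { Write-Host $_['id'] $_['displayname'] }
```

Wrapping the call in @( ) keeps the result an array even when only one user matches.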

Summary

This article demonstrates the current state of working with Azure AD schema extensions using the Microsoft.Graph PowerShell module. You can download the script shown above from the Delegate365 GitHub Samples here. A sample for running such a script in an Azure Automation Account and synchronizing filtered users to a mail-enabled security group will be added to this repository shortly. We hope these step-by-step instructions help you automate user-specific processes and customize tasks based on custom user data.

See also:

- Working with Azure AD schema extensions and Microsoft Graph

- Introducing user schema extensions in Delegate365

Happy managing your M365 tenant with Delegate365 and happy scripting!